Grades of Inductive Skepticism

Brian Skyrms, Wesley Salmon Memorial Lecture

October 25, 2013

Wes Salmon was one of the most loved of the philosophers of science of our local tradition. He was loved for his brilliance as a philosopher and for his kindness and humanity as a person. Each year we assemble for a lecture given in his honor.

This year, today, we assemble to hear Brian Skyrms speak in Wes' honor. When Brian and I had wandered off to find coffee the day before, I sympathized with the difficulty of giving a talk worthy of Wes. Brian said then that fortunately his choice of topic was limited. He must speak either on induction or Zeno. They are the areas in which both Wes and Brian have written. He chose induction. More specifically, he chose inductive skepticism.

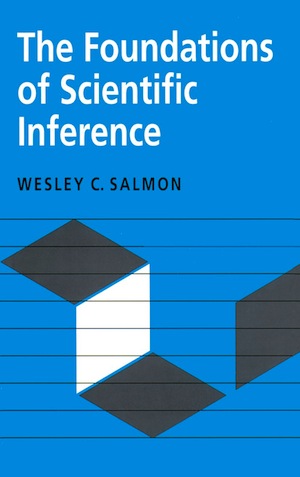

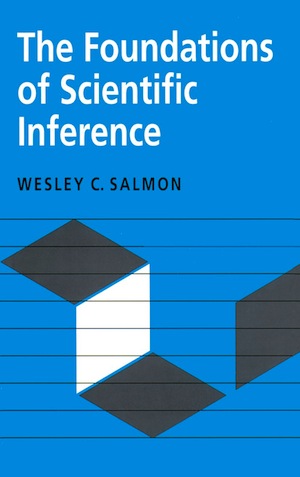

That is an excellent choice. There are a few works in philosophy of science that I find exemplary, even without peer. One of them is Salmon's 1966 Foundations of Scientific Inference. It provides an introduction to inductive inference in science and the use of Bayesian methods. The vehicle used to develop the material is Hume's celebrated problem of induction.

We cannot justify inductive inference, Hume argued in the modernized form Salmon presents, without in turn calling upon inductive inference. We use induction to justify induction. The project is circular and fatally so.

Salmon presents the problem and then provides us with an extensive list of the ways that philosophers over the ages have sought to escape the problem. Each escape is set up in beautifully clear and simple language, so that each time you are convinced of it. It is followed by prose that is just as simple and clear. In it, Salmon destroys the escape.

I still recall reading the book early in my philosophical career. It was exhilarating. I quickly discerned the structure: an escape and its defeat; an escape and its defeat...; and I knew I would be convinced each time and then turned. Yet each time, the experience was fresh and exciting. This is philosophy at its best. It is a compelling analysis whose power is heightened by the simplicity of its words and transparency of its structure. I knew then that it had set a standard that I would forever seek to meet.

Brian's task today is to give a lecture worthy of the Salmon name. Would he?

This is an event, so there are formalities. First Sandra Mitchell stood to mark the occasion. She did her best to convey our affection for Wes to those who did not know him. She then pointed out that Wes' papers are in Hillman Library in Special Collections and that some are even digitized and available online. She had reveled in reading a syllabus in Wes' hand.

She planted a seed that has just sprouted. As soon as I was back in my office, I went to the site and found a folder marked "Foundations of Scientific Inference, 1953-1992" (Box 23, Folder 1). Its content had been digitized and I scrolled through, enthralled. Here was correspondence surrounding the volume, including letters from eager and famous fans. Among the papers was a form Wes filled out for the publisher, the University of Pittsburgh Press, with a lovely synopsis of the work.

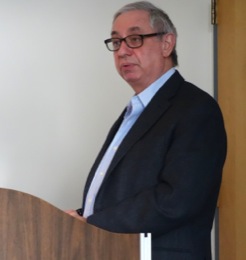

Jim Woodward had agreed to introduce Brian and oversee the proceedings. He read a list of Brian's accomplishments and then handed over the floor.

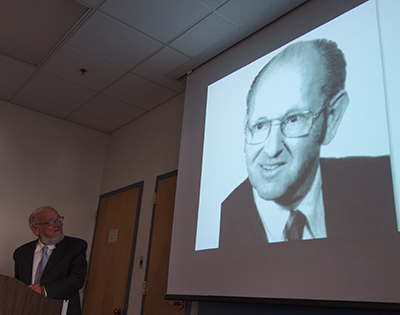

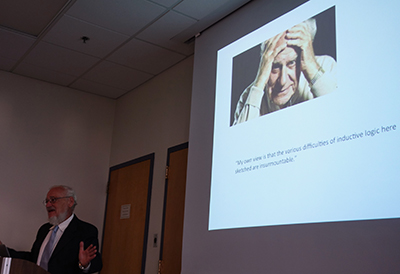

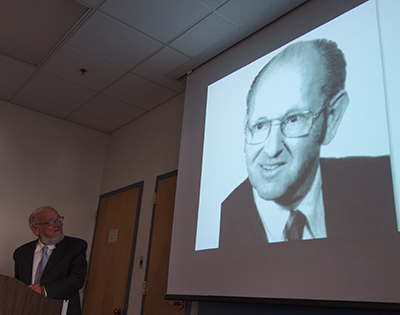

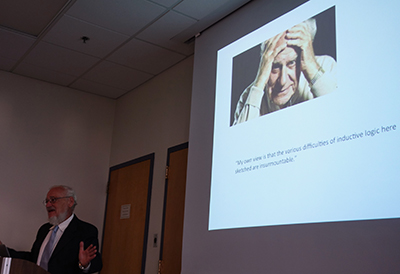

Brian rose to speak. He projected an image of Wes and explained his discomfort at having to find a talk worthy of the event. He said again that his choice was induction or Zeno, and he chose induction.

Now finally the real business of the day began. First came a brief and familiar reminder of Hume's problem. How can we demonstrate that the future will resemble the past?

"It is impossible, therefore, that any arguments from experience can prove this resemblance of the past to the future, since all these arguments are founded on the supposition of that resemblance."

What should we do? Should we follow Popper and just give up on induction? An image of a despairing Popper passed over the screen.

No, Brian continued, that was too quick. There's no reason to halt our skeptical demolition with inductive inference. The same tactics work against deductive inference. Two millennia ago, Agrippa had shown us how. Reason must be used to justify reason.

"It is a fool's game," Brian concluded, "to try to answer absolute skepticism."

It was a clear and lovely thought, but one that is completely obvious only when a far-sighted thinker points it out. It reminded me of a simple strategy I'd devised as a schoolboy to befuddle my friends. Whatever they said, I would look thoughtful and then say "Hmm... can you prove it?" I would try to get away with as many repetitions as possible. One target finally found the counter-algorithm. Every time I'd make the request, he would respond, thoughtfully, "Why....?"

Now Brian's project became clear. He would not even try to answer absolute skepticism. That is the fool's game. Rather, he would address a soluble problem. What if your skepticism is only partial? What if you grant something? Can your skepticism now be answered?

Where do we start? You might expect him to start by granting just a little and showing that this allows just a little; and then adding more to what is granted to show that more can be gained. Brian did not do this. He started at the other end. He began by granting a lot. He took us to the birth of probability theory and then to Thomas Bayes' response to Hume. Bayes, and Laplace after him, had assumed a lot to get their results. They assumed, for example, a uniform distribution as their prior probability distribution.

What if we drop that uniformity? Can we still recover a probabilist's response to Humean skepticism? Yes we can, Brian showed, but we were already moving toward more sophisticated work that culminates in a general theorem proved by Doob in 1948.

The structure of Brian's talk now became apparent. He would take us repeatedly through a cycle. He would identify some element of the present escape from Hume's problem; and then relax it. Then he would show that the probabilist's response could be saved.

As the cycles repeated and the assumptions needed became sparer, I realized that he was not just answering skepticism. He was also giving a lovely historical synopsis of the growth of Bayesian methods. In each cycle, the loss of some assumption would be compensated by someone discovering an ingenious technical or mathematical innovation. That was why Brian recounted the project in reverse order, starting with escapes that presumed the most. For then his narrative would run in parallel with the history.

This was a lecture worthy of the event. I had admired Salmon's writing on the problem of induction for its power and the comfort of its highly visible structure: escape-response-escape-response-...

Now I was hearing a lecture with the same simplicity of structure. Relaxation-compensation-relaxation-compensation-... The material Brian covered became increasingly sophisticated technically. But he was always at pains to ensure that his presentation remained simple, clear and informative.

This was a talk that I'm sure Wes himself would have found praiseworthy.

Brian closed with a slide that read "thank you."

"No--thank you," I thought.

After a short break, Jim began to field the questions for Brian. Some were simply requests for elaboration. I had not fully understood Brian's narrative concerning invariant measures. He obliged by explaining it.

Others came from a direction I should have expected. Brian's account never really left the framework of Bayesian analysis. While I am no fan of its universal applicability, I have gotten used to the idea that it is a dominant view. Yet, to my surprise, there was no real sense of that in question time.

The weight of questioning reflected real skepticism about the Bayesian framework itself. Brian's presentation had been so powerful that it was difficult to find points to challenge. But challenge they did and I could hear from the tension in their voices that the challenge was hard to mount after such a carefully crafted talk.

Jim presented Brian with an umbrella.

John D. Norton
